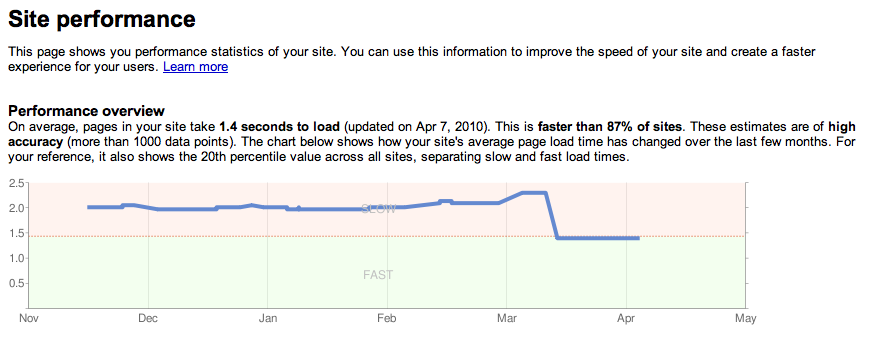

Google has announced that a website’s speed is now one of the many signals used to determine site ranking in Google results. In its blog post, Google defines site speed as how quickly a website responds to web requests and suggests a couple of tools for testing it, including the Webmaster Tools Labs Site Performance app. (Ironically, some of the speed optimizations that app suggests revolve around minimizing DNS lookups caused by external resources like Google’s own Analytics tracker script.) Google Code Speed offers more resources on how to improve web speed and performance.
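If you want a rough number of your own before turning to those tools, the definition above (how quickly a site responds to a web request) can be approximated with a few lines of Python. This is only a sketch under stated assumptions: the function names `time_to_first_byte` and `median_response_time` are made up for illustration, the measurement is taken from your own machine and connection, and it is not how Google computes its speed signal.

```python
import time
import urllib.request


def time_to_first_byte(url, opener=urllib.request.urlopen, clock=time.perf_counter):
    """Return the seconds between sending a request and reading its first byte.

    `opener` and `clock` are injectable (an assumption of this sketch) so the
    function can be exercised without touching the network.
    """
    start = clock()
    with opener(url) as response:
        response.read(1)  # wait until the server has started answering
    return clock() - start


def median_response_time(url, runs=5, **kwargs):
    """Repeat the measurement and return the median to smooth out one-off spikes."""
    samples = sorted(time_to_first_byte(url, **kwargs) for _ in range(runs))
    return samples[len(samples) // 2]


if __name__ == "__main__":
    # Replace the placeholder URL with your own site.
    print(median_response_time("http://example.com/", runs=3))
```

The median is used instead of the average because a single slow DNS lookup or dropped packet would otherwise distort a small sample.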

Who Is on Top

On the surface, this signal seems to make sense. In the hypothetical case where two sites are of equal quality in every respect but one is slower, it is better for users if the faster site is ranked on top: it saves searchers time and avoids frustration. The real question, then, is how strongly this signal should be weighted in the overall ranking mix. In the opposite hypothetical case, where one site is faster but delivers slightly worse content and another is three seconds slower but delivers great content, users wouldn’t want the faster site on top. Google suggests that the speed signal is not a very strong one. It is one of the ‘200 signals’ and affects fewer than 1% of search queries. As Google’s Matt Cutts says:

you don’t need to panic.

Some webmasters preload website data. This approach is common on image-related websites: when a user opens one image, the next image in the gallery is preloaded so that the user does not have to wait for it, and so on. But the Google speed test counts this technique as “slow page loading”, which hurts the overall site ranking even though, in reality, the page is not slow at all. Maybe we can expect Google to be wise enough to understand such techniques in the future. I am glad there are other analytics and metrics solutions competing with Google’s monopoly. Analytics tools require a deep understanding of the needs and desires of website owners, and data should always be presented in a way that is easy to understand and act on.

As a side effect, Google’s announcement adds a more constructive field to SEO: optimizing a client site’s loading speed. In a way, SEO was always about usability, accessibility and site quality because, unless you resort to spamming, improving your site quality should be one of the most important parts of getting more links to it, which increases your ranking. Serving speed was always a part of a site’s quality. Google’s new statements just make this issue more explicit within the quality mix. One issue I wonder about, though: if a site’s slowness were already causing it to get fewer backlinks due to its resulting lower quality, wouldn’t it now be penalized twice by Google, once by the backlink count signal and once by the site speed signal? If we again take the hypothetical case of two identical sites where one is slower, wouldn’t the faster of the two already have a higher PageRank because people are more likely to link to it?

So in the end we can say that “a slower website will have fewer backlinks, making it rank lower and lower”. That makes the Google PR system a bit unjust, as it ranks the site on two factors that are interlinked.

There are many possible reasons why Google would want to make speed a ranking signal. They may want site owners to improve their website speeds, or maybe Google has decided, as an editorial judgment, to push sites in order to increase average internet speed. This does raise some concerns. Even if we agree that a faster internet is good, should Google push this just because they can? If yes, how about pushing other issues that are considered good, say whether a company running a website has a good recycling program? Is that Google’s job? Secondly, are there ulterior motives for this, such as pushing people in the direction of Google products like their DNS optimizations and other website software that will lock them in over time?

Only if we consider such motives does speed as a ranking factor make some sense; after all, we know that Google is getting devilish with its intentions.

A thought came to my mind …

Fadi is running Hackology on his servers at home, which face problems like a slower connection, load shedding and more DNS hops whenever the IP updates. If all that makes this site slow, does it mean the site will drop further down in the rankings?

🙁

That’s what came to my mind =/

Well, Google is starting to suck. First they give us good services, and afterwards they will introduce criteria like the ones I shared above, which will make people pay for their services to get on top. I think it’s time we leave Google for good. But it’s not that simple, I guess.

I told you a long time back that Google was getting evil =/

Even on Google’s official blog post, people were mostly commenting against this “feature”.

Terrific work! This is the type of information that should be shared around the web. Shame on the search engines for not positioning this post higher!